Gary Lucas, rising star of Behavioral Public Choice, turned me on to the work of psychologist Dan Kahan. Highlights from Kahan’s review article on the “Politically Motivated Reasoning Paradigm”, or PMRP:

Citizens divided over the relative weight of “liberty” and “equality” are less sharply divided today over the justice of progressive taxation (Moore 2015) than over the evidence that human CO2 emissions are driving up global temperatures (Frankovic 2015). Democrats and Republicans argue less strenuously about whether states should permit “voluntary school prayer” (General Social Survey 2014) than about whether allowing citizens to carry concealed handguns in public increases homicide rates or instead decreases them…

These are admittedly complex questions. But they are empirical ones. Values can’t supply the answers; only evidence can. The evidence that is relevant to either one of these factual issues, moreover, is completely distinct from the evidence relevant to the other. There is no logical reason, in sum, for positions on these two policy relevant facts–not to mention myriad others, including the safety of deep geologic isolation of nuclear wastes, the health effects of the HPV vaccine for teenage girls; the deterrent impact of the death penalty, the efficacy of invasive forms of surveillance to combat terrorism–to cluster at all, much less to form packages of beliefs that so strongly unite citizens of shared cultural outlooks and divide those of opposing ones. (emphasis mine)

What on Earth is going on?

That explanation is politically motivated reasoning (Jost, Hennes & Lavine 2013; Taber & Lodge 2013). Where positions on some risk or other policy relevant fact has come to assume a widely recognized social meaning as a marker of membership within identity-defining affinity groups, members of those groups can be expected to conform their assessments of all manner of information–from persuasive advocacy to reports of expert opinion; from empirical data to their own brute sense impressions–to the position associated with their respective groups.

If, unlike me, you resist appeals to “common sense,” experiments testing the PMRP are adding up. One example:

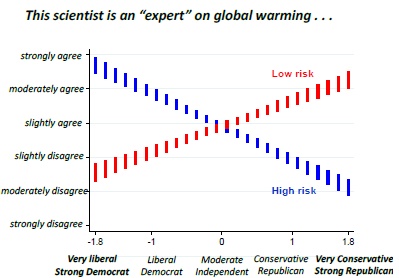

[C]onsider a study of how politically motivated reasoning can affect perceptions of scientific consensus. (Kahan, Jenkins-Smith & Braman 2011) In the study, the subjects (a large, nationally representative sample of U.S. adults) were shown pictures and CVs of scientists, all of whom had been trained at and now held positions at prestigious universities and had been elected to the National Academy of Sciences. The subjects were then asked to indicate how strongly they disagreed or agreed that each one of them was indeed a scientific expert on a disputed societal risk–either global warming, the safety of nuclear power, or the impact of permitting citizens to carry concealed handguns. The positions of the scientists on these issues were manipulated, so that half the subjects believed that scientist held the “high risk” position and half the “low risk” one on the indicated issue. The direction and strength of the subjects’ assessment of the expertise of each scientist turned on out to be highly correlated with whether the position attributed to the scientist matched the one that was predominant among individuals sharing the subjects’ cultural out-looks (Figure 2).

Results, in a single figure:

Some other findings of the PMRP:

High numeracy–a quantitative reasoning proficiency that strongly predicts the disposition to use System 2 information processing–also magnifies politically motivated reasoning. In one study, subjects highest in Numeracy more accurately construed complex empirical data on the effectiveness of gun control laws but only when the data, properly interpreted, supported the position congruent with their political outlooks. When the data properly interpreted was inconsistent with their predispositions, they were more disposed than low numeracy subjects to dismiss it as flawed.

Though I’m admittedly unhappy with Kahan’s interpretation:

These data, then, support a very different conclusion from the standard one: politically motivated reasoning, far from reflecting too little rationality, reflects too much.

Why wouldn’t “being rational if and only if it helps your cause” still count as “too little rationality”? The most you could fairly say here is that “a little rationality is a dangerous thing.”

No-stake PMRP designs seek to faithfully model this real-world behavior by furnishing subjects with cues that excite this affective orientation and related style of information processing. If one is trying to model the real-world behavior of ordinary people in their capacity as citizens, so-called “incentive compatible designs”–ones that offer monetary “incentives” for “correct” answers–are externally invalid because they create a reason to form “correct” beliefs that is alien to subjects’ experience in the real-world domains of interest.

On this account, expressive beliefs are what are “real” in the psychology of democratic citizens (Kahan in press_a). The answers they give in response to monetary incentives are what should be regarded as “artifactual,” “illusory” (Bullock et al., pp. 520, 523) if we are trying to draw reliable inferences about their behavior in the political world.

Professionally, I must confess, I wish Kahan at least cited my Myth of the Rational Voter, which makes many of the same points. But I strive to put such pettiness aside. The more scholars dogpile on the shameless religiosity of the political mind, the better.

READER COMMENTS

Plucky

Dec 12 2016 at 2:31pm

Slightly trolly/devil’s advocate Q: In econ, “rational” is generally defined as approximately “internally consistent with your preferences”. If your actual preferences are such that the only thing you really want is the defeat, humiliation, and degradation of your outgroup, what is (economically speaking) irrational about politically-motivated reasoning?

pyroseed13

Dec 12 2016 at 4:27pm

If these hold true, this doesn’t bode well for fans of “epistocracy,” like you and Jason Brennan.

Hazel Meade

Dec 12 2016 at 4:33pm

This is something that I noticed by reflection very early in college. I realized that I felt influenced to defend positions that I had very little knowledge of simply because it was the doctrinaire position of the political identity I had chosen. And I stopped doing it, and in fact decided to explicitly not identify with either side anymore. I became an independent.

I think a similar kind of reasoning is why increasing numbers of people identify as independents. However, the people who do NOT identify as independents are susceptible to this influence. If you identify as a Republican and a Democrat attacks Republicans as unscientific on global warming, you’re going to feel compelled to defend Republicans. So you’re going to try to argue the Republican position, which is skeptical on global warming. This is even if you otherwise would not have taken a position on global warming at all. Tribal affiliations make you want to defend your tribe.

Jason Brennan

Dec 12 2016 at 5:01pm

pyroseed13, check out my book. I talk about Kahan in it. This isn’t new to me.

Graham Peterson

Dec 12 2016 at 6:11pm

Most of this research starts with a bad premise and runs with it. The question is “why don’t most people reason the way 18th century positivists and their descendants figured they ought to?” The question should be: “what reason do people have to reason at all without motivation to do so? And is that a bad thing in a world of competing motivations?”

Nathan Taylor

Dec 12 2016 at 7:14pm

You said: “Professionally, I must confess, I wish Kahan at least cited my Myth of the Rational Voter, which makes many of the same points.”

I would argue that Myth of the Rational Voter takes a view of human cognition as having built-in heuristics or biases that are not true. So the worldview of Kahneman and Tversky. This is especially important for economics. For example, human intuition is zero-sum, and getting over this bias is pretty much the first step to understanding economics. So the division of labor, Ricardo, Adam Smith’s pin factory, lump of labor.

I would argue Dan Kahan’s work is built around a view of human nature which is tribal. So cultural cognition (as he calls it) is a tool for analyzing in group/out group dynamics.

Both are true of course.

But I think the tribal mindset mode of analysis (Kahan) is far better for understanding political conflict, while the Kahneman view is best for understanding economics.

Do not think we disagree here. And of course you have some of the tribal biases in your book, and are aware of this. But I would say that since Kahan is coming more from a Jonathan Haidt than a Kahneman frame, then even if he had read your book it’s not clear how much overlap there would be. The underlying analysis models are just too different. Even if some topics overlap, and both of you are aware of tribal bias versus heuristic bias.

A good example of tribal analysis mode versus cognitive bias analysis mode is that for non-sacred debates, more info is good, and can change minds. How much does it cost to make an iPhone? But for politicized sacredness, more info challenges your group identity, so more info locks in your beliefs harder. Kahan’s written a lot about global warming in this context. Likely you saw his charts on this. In fact, Ezra Klein did a very nice write up on this a couple of years ago. So if you missed that, worth a look. http://www.vox.com/2014/4/6/5556462/brain-dead-how-politics-makes-us-stupid

mbka

Dec 12 2016 at 10:15pm

Bryan,

all this work and the insights behind it go back to Mary Douglas’ Grid and Group theory and the associated worldviews, later developed into Cultural Theory with Aaron Wildavsky, and Michael Thompson. It’s also called “cultural theory of risk”.

Example, see this link.

So this has been around for decades, but as you say, a good thing that it is finally becoming mainstream.

Peter Gerdes

Dec 13 2016 at 6:54am

Just to be a bit pedantic, the answers people give in response to monetary incentives aren’t illusory; they just reflect dispositions/behavior that is less common.

Those answers certainly indicate how people behave when they have reason to convey the academic orthodoxy. I suspect they may even also track how people behave in the rare cases where they have a direct (and fairly simple) financial stake in the outcome. For example, how they would react if they were charged with pricing flood insurance…or considering the value of such insurance to them.

They just aren’t the answers that are relevant to daily behavior or voting.

Thaomas

Dec 13 2016 at 12:38pm

I’m not entirely persuaded. Evidence based positions [mine :)] seem to be on both (or neither) sides of many debates.

Anti “Progressive”:

Nuclear and geological storage of nuclear wastes can be safe.

GMOs are safe.

Fracking can be safe.

FDA approves drugs that have little health benefits.

Anti “Conservative”:

CO2 accumulation in the atmosphere is harmful

The HPV vaccine is safe for girls AND boys.

Concealed carry is unproven as increasing public safety.

Death penalty as a deterrent is unproven.

Significant unemployment effects of modest increase in the minimum wage is unproven.

Picture ID as a prevention of voter fraud is unproven.

FDA fails to approve drugs because of being too risk averse.

Andrew_FL

Dec 13 2016 at 1:53pm

How the heck do you figure this is “Anti “Conservative””?

Musca

Dec 13 2016 at 3:07pm

Isn’t there some question-begging going on here, or at least a smuggled assumption?

Do most people recognize a split between deontological and consequentialist reasoning? Or, to be clearer, don’t most people axiomatically assume that the moral course is identical to the one which leads to the most beneficial outcomes?

This should be testable. In other words, could you devise experiments to determine the amount of agreement with the following statement: “if you believe in policy X, would you continue to support X even if it leads to worse outcomes for the majority of people?”

Personally, I would have a hard time actively supporting the idea of, say, a single-payer health care system even if aggregate health (however measured) was noticeably better. After all, single-payer is just wrong…

Thaomas

Dec 13 2016 at 6:05pm

Good catch, Andrew.
