Biologist Paul Ehrlich’s recent appearance on 60 Minutes drew an immediate response, with a deluge of denunciations of his decades spent peddling baseless scare stories. Ehrlich responded, tweeting:

Ehrlich’s invocation of “peer review” is notable. Notice how he conflates this process with the practice of science itself.

But Ehrlich is wrong. As Adam Mastroianni, a postdoctoral researcher at Columbia Business School, noted in a recent article, peer review—where “we have someone check every paper and reject the ones that don’t pass muster”—is only about 60 years old:

From antiquity to modernity, scientists wrote letters and circulated monographs, and the main barriers stopping them from communicating their findings were the cost of paper, postage, or a printing press, or on rare occasions, the cost of a visit from the Catholic Church. Scientific journals appeared in the 1600s, but they operated more like magazines or newsletters, and their processes of picking articles ranged from “we print whatever we get” to “the editor asks his friend what he thinks” to “the whole society votes.” Sometimes journals couldn’t get enough papers to publish, so editors had to go around begging their friends to submit manuscripts, or fill the space themselves. Scientific publishing remained a hodgepodge for centuries.

(Only one of Einstein’s papers was ever peer-reviewed, by the way, and he was so surprised and upset that he published his paper in a different journal instead.)

Peer review’s supposed benefit is “catch[ing] bad research and prevent[ing] it from being published.” But, Mastroianni notes:

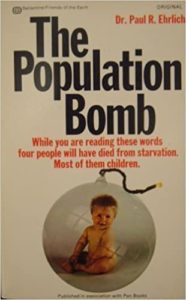

The Population Bomb belongs on the list of peer-reviewed junk science.

And there are costs to the process:

By one estimate, scientists collectively spend 15,000 years reviewing papers every year. It can take months or years for a paper to wind its way through the review system…And universities fork over millions for access to peer-reviewed journals, even though much of the research is taxpayer-funded, and none of that money goes to the authors or the reviewers.

Huge interventions should have huge effects…if peer review improved science, that should be pretty obvious, and we should be pretty upset and embarrassed if it didn’t.

It didn’t. In all sorts of different fields, research productivity has been flat or declining for decades, and peer review doesn’t seem to have changed that trend. New ideas are failing to displace older ones. Many peer-reviewed findings don’t replicate, and most of them may be straight-up false. When you ask scientists to rate 20th century discoveries in physics, medicine, and chemistry that won Nobel Prizes, they say the ones that came out before peer review are just as good or even better than the ones that came out afterward. In fact, you can’t even ask them to rate the Nobel Prize-winning discoveries from the 1990s and 2000s because there aren’t enough of them.

A recent article in Nature is titled ‘‘Disruptive’ science has declined — and no one knows why,’ but Mastroianni may be giving us at least some of the answer:

Researchers are as responsive to incentives as anyone. The peer review process incentivizes “gaming,” with people looking to satisfy reviewers and run up their publications rather than break new ground. The benefits of peer review, it seems, do not outweigh the costs. It ought to be neither a straitjacket for new research nor a shield for charlatans like Ehrlich.

READER COMMENTS

Thomas Lee Hutcheson

Jan 25 2023 at 4:57pm

Peer review is fine. The problem is making peer reviewed papers too important in decisions of tenure and promotion. That should give more weight to teaching, mentoring, research collaboration, public science, non-peer-reviewed papers, etc.

William Connolley

Jan 26 2023 at 4:33am

You are unwise to take PE’s assertion that tPB was peer-reviewed at face value. Science books are rarely peer-reviewed, and still less so pop-sci books; most likely, this is simply another error on PE’s part.

Meanwhile, your attempt to assess the effects of peer review is broken, because you don’t have a comparison group: peer review is so widespread, this is inevitable. Instead, you should try thinking: if something is so widespread, perhaps it does have some benefits? Blaming the loss of “disruptive science” on peer review is weird.

Jon Murphy

Jan 26 2023 at 6:09am

What do you mean? There is a comparison group: the pre-1960s system of publication. That’s the comparison used.

Knut P. Heen

Jan 26 2023 at 6:08am

There is always a danger that a peer-review system develops into a guild in which the guild members get their stuff published while outsiders with different ideas do not. However, the bigger problem seems to be that too many people think that the findings in a peer-reviewed paper necessarily must be correct. Suppose non-peer-reviewed articles are correct at a frequency of 1 in a billion. Suppose peer-reviewed articles are correct at a frequency of 1 in a thousand. The peer-review process improved quality quite a bit, but almost all articles are still incorrect.

Jon Murphy

Jan 26 2023 at 6:12am

I was just coming here to say the same thing. Even Ehrlich makes that mistake. He appeals to just about everything except results.

Warren Platts

Jan 28 2023 at 2:15am

Ehrlich is not a charlatan. He’s merely pointing out the ecological truism that biological populations can’t increase forever.

Jon Murphy

Jan 28 2023 at 6:01am

He is a charlatan. He is not pointing out a truism that populations cannot grow forever. He made specific claims that the population was already too big and mass starvation was less than a decade away. He predicted (and bet) that resources would be more expensive in the 90s. He was wrong. He continues to be wrong. His insistence he is right because he was “peer reviewed” is unscientific and irrelevant.

I don’t know what to call someone who repeatedly insists they’re right when the data constantly refute them and who relies on obfuscation to “prove” it other than a charlatan.

If all he’s doing is repeating a truism, then I guess he’s no different from the doomsday soothsayer downtown who shouts “Judgement Day is coming!” Sure, maybe he’s been wrong predicting the End of Days so far, but he’s merely repeating the truism that the Sun will eventually expand and swallow the Earth. Just because it didn’t happen in 2022 like he said doesn’t prove him wrong.

Or maybe he’s no different than the fortune teller at the Winter Fest who said I faced death last weekend. She wasn’t playing a part for entertainment. She was merely stating the truism that I will perish someday. The fact she said last weekend doesn’t mean anything. Eventually she’ll be right.

Warren Platts

Jan 28 2023 at 12:10pm

From one of the links above:

Here, Paul makes it quite explicit famines can be avoided in the short run if we goose the carrying capacity of the Earth far enough, but he is entirely correct that such programs can only provide a stay of execution. And he’s right: no amount of technology can sustain a growing population forever.

Jon Murphy

Jan 28 2023 at 12:18pm

Again, he’s talking about a decade or two. Read The Population Bomb (or the interview, for that matter).

Look, either the man is a charlatan or he is not making any contribution to science and understanding (merely repeating a truism). Neither option is good for Ehrlich, and both suggest he is undeserving of the scientific laurels he has gotten.

Jon Murphy

Jan 28 2023 at 1:36pm

Additionally, I should point out that, as a factual matter, the whole “stay of execution” is incorrect. As a scientific matter, there is no reason to think Mankind will mindlessly consume all resources and then just fade away into extinction. That was the whole point of Julian Simon’s bet. Humans are amazingly intelligent in a way Ehrlich and his followers do not understand.

Warren Platts

Jan 28 2023 at 2:48pm

That bet was fun, but pretty much meaningless. I’ll bet you if you look at the metals prices as of today, Ehrlich would have won the bet!

Jon Murphy

Jan 28 2023 at 4:18pm

Fun fact: People have been tracking, and for every decade, Ehrlich would have lost.

It’s a direct test of Ehrlich’s thesis, which is why he was willing to put $10k on it. If it was meaningless, you’d better tell him that.

Warren Platts

Jan 29 2023 at 3:48am

You’re shooting from the hip again, Jon. I just took a quick look at zinc and copper charts. They are up since the 90’s, even adjusting for inflation. Here’s one for “All Commodities”. Also up way higher than the rate of inflation.

Global Price Index of All Commodities (PALLFNFINDEXM) | FRED | St. Louis Fed (stlouisfed.org)

Jon Murphy

Jan 29 2023 at 8:48am

You don’t know what the terms of the bet were, do you?

Jon Murphy

Jan 29 2023 at 9:02am

I’m not going to get into the basics of the bet since you believe it to be meaningless. If you want to die on a meaningless hill, who am I to stop you?

Warren Platts

Jan 29 2023 at 11:50am

Simon got lucky. Interesting article on the subject by Tim Worstall here:

But Why Did Julian Simon Win The Paul Ehrlich Bet? (forbes.com)

Jon Murphy

Jan 28 2023 at 8:43am

As much as I’d like to blow up the whole peer review system, it does have merits. As Thomas says above, there is an incentive problem. Until that gets fixed, I’m not sure any peer review or substitute system will really be helpful.

What makes reform more difficult, as Knut points out, is the mythos that surrounds peer review. Like Ehrlich, many people treat peer review as a statement on the accuracy and truth of the article. But even setting aside the issues you raise in this article, it doesn’t hold that peer review is a search for truth. My philosophy when I review is that discussions should take place in the pages of the journals and at conferences, not behind closed doors in the peer review process. I look to see if the argument makes sense, not whether I’m convinced by it. I’ve recommended accept for papers I disagree with and reject for papers I’ve thought were probably correct.

I will say that the process can improve manuscripts greatly. I’ve gotten two pubs at what my university considers A level journals. In both cases, the peer reviewers have made the manuscript vastly superior to what I submitted. And even rejections (with one exception) have led to better papers thanks to reviewer comments.

Regardless, the whole system does need massive reform. I just don’t know how that’ll come about. Academia can be extraordinarily conservative when it comes to changes, especially when those changes threaten status.

Warren Platts

Jan 28 2023 at 2:13pm

The main reason for peer review is to serve as a sort of “population control” given that the number of papers keeps expanding hyper-exponentially. Probably the reason there is less “disruptive” science is not because of peer review: it’s simply because science is doing its job of approximating reality. That is, we’ve reached the point of diminishing returns. There is simply nothing revolutionary left to be discovered. In Thomas Kuhn’s terms, we’ve entered a plateau of endless “normal science.” The science writer John Horgan wrote a book in the early 1990’s entitled The End of Science where he made the claim there’s just nothing much left to be discovered, certainly nothing Darwin or Einstein-level. His book has held up pretty well, imho. Better anyways than Fukuyama’s End of History thesis! I can think of maybe one scientific revolution in the meantime that counts: possibly the discovery that the universe is expanding at an accelerating rate.

Jon Murphy

Jan 28 2023 at 4:21pm

These sorts of predictions age like milk. I feel like every few years, someone comes along and says we have reached the pinnacle…Just as we blow past the supposed peak.

Warren Platts

Jan 29 2023 at 3:55am

Honestly, Jon, let’s just take a look at economics, your specialty. Ricardo’s comparative advantage, the Law of Demand, are these sorts of theories in any danger of being proven false? When was the last genuinely surprising result obtained that was remotely comparable to the discovery that the universe is expanding at an accelerating rate?

Jon Murphy

Jan 29 2023 at 9:00am

Given you keep asserting that they are false, at least you think so.

Well, that sort of judgment is subjective, so I won’t argue with you whether or not you agree with the potential impact.* Personally, I think the work on complexity and network economics is comparable (although, I’ll admit, I am biased as it is my field of research). Experimental economics, behavioral economics, Law & Economics, and Public Choice were all major advancements. All of them caused major revolutions in thought and policy.

Expanding beyond economics, I think the recent advances in cold fusion (if they hold) are huge. mRNA vaccines have the potential to be a major advancement in medical science. If we can get regulations out of the way, I think there is substantial potential for new energy developments. Indeed, I think if we can get regulations out of the way, we could have major advances in space travel and mining.

I’ve said it before and I’ll say it again: I believe humanity is on the cusp of a new Golden Age. The distinctly illiberal turn of the world of the past 7 years has reduced that hope in me, but I am not ready to abandon it. If we do enter a period of scientific stagnation, it’ll be because of bureaucratic repression, rather than Humanity having achieved all knowledge possible.

*As an aside, if the Universe is expanding at an accelerating rate, doesn’t that fact alone disprove Ehrlich’s truism?

Warren Platts

Jan 29 2023 at 12:01pm

No, it entails we’re all going to die a lot sooner than previously thought! As for Ricardo’s comparative advantage, everyone believes in it, including me (although the theory is severely weakened by the assumption that workers are interchangeable cogs). As for space mining, here is a minor contribution of my own:

Prospecting for Native Metals in Lunar Polar Craters | AIAA SciTech Forum

Jon Murphy

Jan 29 2023 at 2:08pm

Which makes one wonder why you’ve spent much of the past 7 years denouncing it and, as recently as last week, saying that it cannot explain trade in the real world. But I digress…

My main point is that stagnationists seem to always make their “we’ve reached the peak” claims just as another Industrial Revolution is about to begin. If the same pattern holds, then I may need to upward revise my estimates of the coming years.

Warren Platts

Jan 29 2023 at 4:58pm

I do not denounce Ricardo’s theory. That’d be like denouncing Charles Darwin’s theory. What I denounce is the economic equivalent of using Darwin’s theory as some sort of a justification for eugenics. Destruction is not always creative.

Jon Murphy

Jan 29 2023 at 5:27pm

Well, I’ll let the record speak for itself on that one.

Agreed. That’s why I explicitly reject any form of centralized planning, whether it be “industrial” or whatever. Mere mortals attempting to play God; it’s just a different form of eugenics.
